Introduction: The Context Window Challenge

If you’ve ever worked with large language models like ChatGPT, Claude, or Gemini, you know the frustration. You’re deep into a conversation, the context is rich, and then the AI suddenly “forgets” what was said earlier. The cause is the context window limit: the maximum number of tokens a model can process at once. Tokens are chunks of text, often whole words or word fragments. When a conversation exceeds that limit, many chat interfaces drop or truncate the earliest parts to make room.
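The sketch below makes the truncation behavior concrete with one common strategy, a sliding window. It keeps the newest messages and drops the oldest once a token budget is exceeded. It assumes a crude estimate of one token per word. Real models use subword tokenizers, and each platform handles overflow in its own way. `estimate_tokens` and `fit_to_window` are hypothetical helpers, not any vendor's API.

```python
def estimate_tokens(text: str) -> int:
    """Very rough token estimate: one token per whitespace-separated word.

    Assumption for illustration only; real subword tokenizers usually
    produce more tokens than words.
    """
    return len(text.split())


def fit_to_window(messages: list[str], max_tokens: int) -> list[str]:
    """Keep the most recent messages whose combined estimate fits max_tokens.

    Walks backward from the newest message and stops at the first one that
    would overflow the budget, so the oldest turns are the ones dropped.
    """
    kept: list[str] = []
    used = 0
    for msg in reversed(messages):
        cost = estimate_tokens(msg)
        if used + cost > max_tokens:
            break
        kept.append(msg)
        used += cost
    kept.reverse()  # restore chronological order
    return kept


if __name__ == "__main__":
    history = [
        "Project scope: build a budgeting app for freelancers",
        "Constraint: must run offline",
        "Question: which database should we use?",
    ]
    print(fit_to_window(history, max_tokens=12))
```

With a 12-token budget, the project-scope message (8 words) is the one dropped. That is exactly the “forgetting” described above: the two later messages still fit, but the original scope falls out of the window.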

For power users and prompt engineers, this isn’t just an annoyance; it’s a productivity bottleneck. Enter AI prompt compression: techniques to fit more meaning into fewer tokens, ensuring you get maximum value from limited space.

Why Prompt Compression Matters

When you compress prompts strategically:

- You save tokens (and reduce costs).

- You keep essential context intact.

- You can improve response accuracy by removing noise.

- You can run longer conversations without hitting walls.

This is especially critical for technical workflows, coding assistance, or research projects where every detail matters.

Core Prompt Compression Techniques

1. Summarization Instead of Raw Copy-Paste

Rather than pasting entire transcripts, compress them into concise summaries.

- Example: Don’t paste a 500-word meeting transcript into every follow-up. Ask once, “Summarize key decisions, action items, and deadlines from this transcript,” and then carry that summary forward in place of the raw text.
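The example above relies on the model to do the summarizing. The minimal sketch below shows the same carry-forward idea without a model. It keeps only the transcript lines that start with hypothetical labels (`Decision:`, `Action:`, `Deadline:`). This is a simple extractive filter swapped in for LLM summarization, and `digest` is an illustrative helper. It only helps when the transcript already uses such labels.

```python
# Hypothetical labels; a real transcript may use different markers or none.
KEEP_PREFIXES = ("Decision:", "Action:", "Deadline:")


def digest(transcript: str, prefixes: tuple[str, ...] = KEEP_PREFIXES) -> str:
    """Return only the labeled lines from a transcript, in original order.

    A crude extractive stand-in for model summarization: everything that
    does not start with one of the prefixes is discarded.
    """
    lines = (line.strip() for line in transcript.splitlines())
    # str.startswith accepts a tuple, matching any of the prefixes.
    return "\n".join(line for line in lines if line.startswith(prefixes))


if __name__ == "__main__":
    transcript = """Alice: Morning everyone, quick sync today.
Bob: I looked at the vendor quotes.
Decision: Go with vendor B.
Action: Bob sends the contract to legal.
Deadline: Contract signed by Friday.
Alice: Thanks, see you next week."""
    summary = digest(transcript)
    print(summary)
    print(f"{len(transcript.split())} words -> {len(summary.split())} words")
```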

2. Abbreviation and Symbol Systems

Replace long repetitive phrases with shorthand.

- Example: Instead of repeating “Customer Support Representative,” define it as “CSR” at the start of the prompt.
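A short glossary can apply the abbreviation technique mechanically. The minimal sketch below swaps each long phrase for its short code and prepends a one-line definition, but only for the codes it actually used. `GLOSSARY` and `compress_with_glossary` are hypothetical names chosen for illustration.

```python
# Hypothetical glossary: each long phrase maps to a short code defined once.
GLOSSARY = {
    "Customer Support Representative": "CSR",
    "Service Level Agreement": "SLA",
}


def compress_with_glossary(text: str, glossary: dict[str, str]) -> str:
    """Replace glossary phrases with abbreviations and prepend their definitions.

    Only abbreviations that were actually used get a definition line, so
    an unmatched glossary adds no tokens.
    """
    used: dict[str, str] = {}
    for phrase, abbr in glossary.items():
        if phrase in text:
            text = text.replace(phrase, abbr)
            used[abbr] = phrase
    if not used:
        return text
    # The header is added after replacement, so its phrases stay spelled out.
    header = "; ".join(f"{abbr} = {phrase}" for abbr, phrase in used.items())
    return f"Definitions: {header}.\n{text}"


if __name__ == "__main__":
    prompt = (
        "Draft a reply as a Customer Support Representative. "
        "The Customer Support Representative must cite the Service Level Agreement."
    )
    print(compress_with_glossary(prompt, GLOSSARY))
```

The definition line costs tokens of its own. This approach therefore pays off only when a phrase repeats, which is the case the example above describes.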

3. Instructional Layering

Nest instructions to avoid repeating them.

- Example: “For each response: 1) Summarize in 2 lines, 2) Add 3 key takeaways.” This way you don’t have to keep reminding the AI of the format.
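The layering idea can be sketched as a template that states the format rules once in a header and then lists the items they apply to. This avoids restating the rules for each item. `layered_prompt` is a hypothetical helper, and the rule text is taken from the example above.

```python
# Format rules stated once; wording taken from the example above.
FORMAT_RULES = [
    "Summarize in 2 lines",
    "Add 3 key takeaways",
]


def layered_prompt(rules: list[str], items: list[str]) -> str:
    """Build one prompt whose numbered format rules apply to every listed item."""
    numbered = ", ".join(f"{i}) {rule}" for i, rule in enumerate(rules, start=1))
    body = "\n".join(f"- {item}" for item in items)
    return f"For each item below: {numbered}.\n{body}"


if __name__ == "__main__":
    print(layered_prompt(FORMAT_RULES, ["Article A", "Article B", "Article C"]))
```

However many items you add, the rules appear in the prompt only once.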

4. Embedding Reference Points

Use numbered tags instead of repeating full text.

- Example: “Refer back to Section [1] for project scope and Section [2] for constraints.”
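Here is one way to generate such tags. The minimal sketch below registers each long context block once under a numbered tag, and later instructions cite the tag instead of the text. `build_sections` is a hypothetical helper, and the section contents are made up for illustration.

```python
def build_sections(blocks: dict[str, str]) -> tuple[str, dict[str, str]]:
    """Return a context preamble with [n] tags and a name -> tag lookup.

    Each block's full text appears once, in the preamble; later prompts
    refer to it only by its short tag.
    """
    preamble_lines = []
    tags: dict[str, str] = {}
    for n, (name, text) in enumerate(blocks.items(), start=1):
        tag = f"[{n}]"
        tags[name] = tag
        preamble_lines.append(f"Section {tag} {name}: {text}")
    return "\n".join(preamble_lines), tags


if __name__ == "__main__":
    # Hypothetical project details for illustration.
    preamble, tags = build_sections({
        "Project scope": "Mobile budgeting app for freelancers; iOS first.",
        "Constraints": "Offline support, no third-party analytics, ship by Q3.",
    })
    print(preamble)
    print(f"Refer back to Section {tags['Project scope']} for project scope "
          f"and Section {tags['Constraints']} for constraints.")
```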

5. Using Tools Like My Magic Prompt

With My Magic Prompt, you can:

- Auto-generate compressed prompt templates.

- Organize reusable shorthand and reference systems.

- Quickly test compressed vs. expanded prompts side by side.

Practical Examples of Prompt Compression

| Use Case | Long Prompt | Compressed Prompt |
|---|---|---|
| Meeting Notes | “Here’s the transcript. Extract key decisions, action items, and open questions.” | “Summarize transcript: [decisions] [actions] [open Qs].” |
| Coding Help | “Please write a Python function that calculates the Fibonacci sequence up to n and includes error handling for negative inputs.” | “Python fn: Fibonacci up to n, error if n<0.” |
| Content Strategy | “Draft a LinkedIn post about prompt engineering trends in 2025, limit to 200 words, professional but approachable tone, include 3 hashtags.” | “LinkedIn post: prompt eng. 2025, 200w, pro+approach, +3 #.” |
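You can measure compression instead of eyeballing it. The sketch below compares word counts for the three rows of the table. Word counts are only a stand-in for tokens, which is an assumption. Symbol-heavy shorthand such as `pro+approach` or `+3 #` counts as a single word here but can split into several tokens, so a tokenizer for your specific model gives more accurate numbers. `PAIRS` and `reduction` are hypothetical names.

```python
# (long prompt, compressed prompt) pairs copied from the table above.
PAIRS = {
    "Meeting Notes": (
        "Here's the transcript. Extract key decisions, action items, and open questions.",
        "Summarize transcript: [decisions] [actions] [open Qs].",
    ),
    "Coding Help": (
        "Please write a Python function that calculates the Fibonacci sequence "
        "up to n and includes error handling for negative inputs.",
        "Python fn: Fibonacci up to n, error if n<0.",
    ),
    "Content Strategy": (
        "Draft a LinkedIn post about prompt engineering trends in 2025, limit to "
        "200 words, professional but approachable tone, include 3 hashtags.",
        "LinkedIn post: prompt eng. 2025, 200w, pro+approach, +3 #.",
    ),
}


def reduction(verbose: str, compressed: str) -> float:
    """Percent fewer whitespace-separated words in `compressed` than in `verbose`."""
    n_verbose = len(verbose.split())
    if n_verbose == 0:
        raise ValueError("verbose prompt is empty")
    return 100 * (1 - len(compressed.split()) / n_verbose)


if __name__ == "__main__":
    for use_case, (verbose, compressed) in PAIRS.items():
        print(f"{use_case}: {reduction(verbose, compressed):.0f}% fewer words")
```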

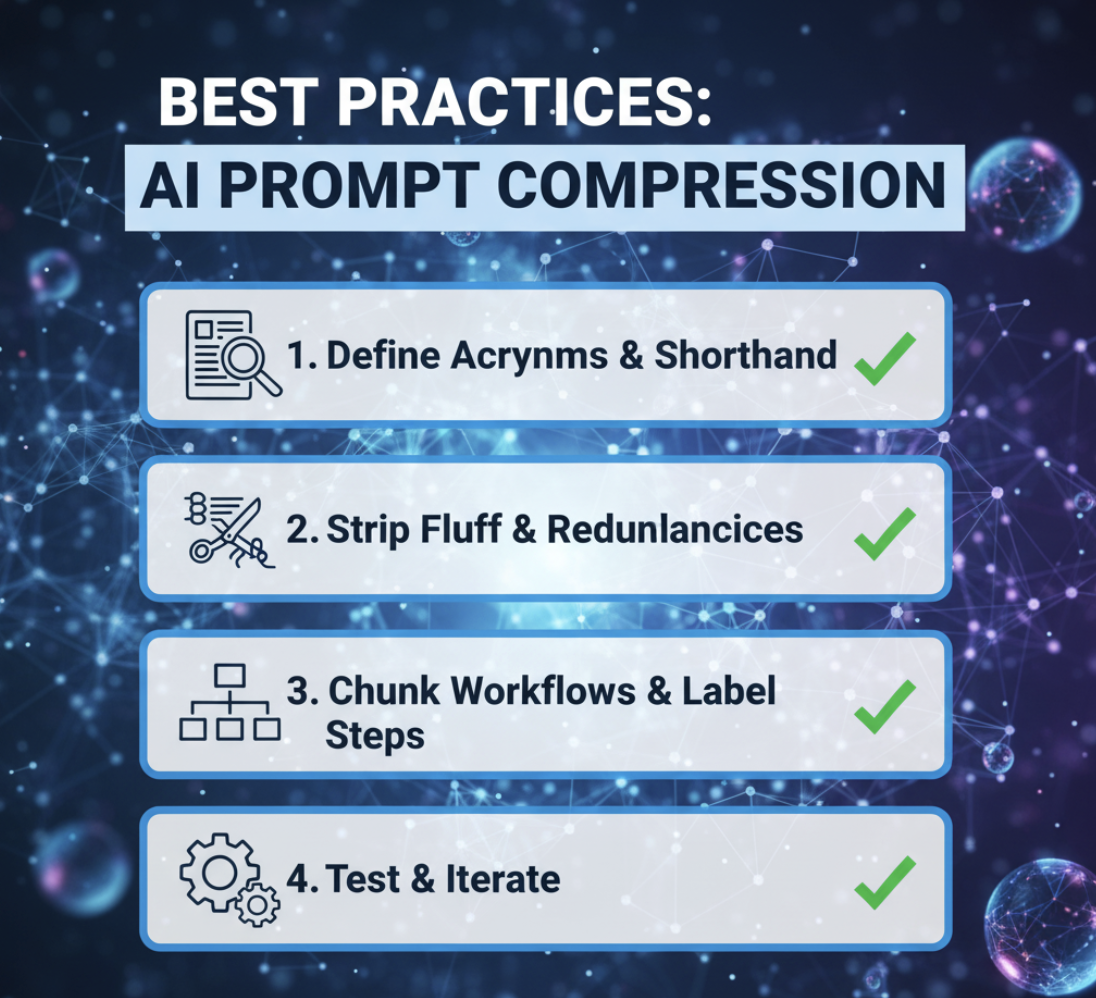

Best Practices for AI Prompt Compression

- Define acronyms early. Reuse shorthand consistently.

- Strip fluff. Remove filler words and redundancies.

- Chunk workflows. Break complex asks into steps with labels.

- Test and iterate. Compare AI output quality between compressed and verbose prompts.

How My Magic Prompt Streamlines Compression

Token budgeting doesn’t need to be manual. With My Magic Prompt’s features:

- Prompt Builder: Craft optimized, reusable templates.

- Prompt Templates Library: Access pre-built compressed formats for coding, research, and workflows.

- AI Toolkit: Test compressed prompts side by side to evaluate performance.

- Magic Prompt Chrome Extension: Generate compressed alternatives instantly as you work in browser tools.

FAQ: AI Prompt Compression

Q1: What’s the biggest mistake in compressing prompts?

Over-compressing — stripping away too much detail, leading to vague or low-quality outputs.

Q2: How much can I realistically compress without losing meaning?

Many prompts can be shortened by roughly 30–50% while retaining key context, especially with abbreviations and summaries.

Q3: Can prompt compression improve AI speed as well as token usage?

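Often, yes. Shorter inputs take less time to process and leave more room before the context limit, so there is less chance of truncation. Total response time, however, also depends heavily on how long the output is.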

Q4: Which AI platforms benefit most from compression?

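Every LLM has a finite context window, so all of them benefit, including ChatGPT, Claude, and Gemini. Compression matters most in long conversations, document-heavy work, and API workflows billed per token.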

Q5: How can I organize my compressed prompts?

Use a prompt management tool like My Magic Prompt to save, tag, and reuse your best compressed prompts.

Bringing It All Together

AI context windows may be limited, but your creativity doesn’t have to be. With AI prompt compression, you can maximize meaning, save tokens, and push the boundaries of what’s possible inside limited space.

Ready to level up? Explore prompt templates, compression tools, and workflows with My Magic Prompt.